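import pandas as pd
from IPython.display import display  # already available inside a notebook

# Work on a copy so the original DataFrame stays untouched
df3 = df.copy()

# Prefer the explicit column names, falling back to the short ones
age_col = "age_years" if "age_years" in df3.columns else "age"
income_col = "income_dollars" if "income_dollars" in df3.columns else "income"

# Bin ages into 5-year groups from 65 to 85, plus a final 85-110 group
if age_col in df3.columns:
    df3["age_group"] = pd.cut(df3[age_col].astype(float), bins=[65, 70, 75, 80, 85, 110], include_lowest=True)

# Bin income at its quintiles; if two quantiles tie, bump the edge by 1
# so the edges stay strictly increasing, as pd.cut requires
if income_col in df3.columns:
    quantiles = df3[income_col].quantile([0, .2, .4, .6, .8, 1.0]).values
    edges = [quantiles[0]]
    for value in quantiles[1:]:
        edges.append(value if value > edges[-1] else edges[-1] + 1)
    df3["income_band"] = pd.cut(df3[income_col].astype(float), bins=edges, include_lowest=True)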

# Counts of age group by gender
if {"age_group", "gender"}.issubset(df3.columns):
    print("Distribution 1: Age + Gender")
    display(pd.crosstab(df3["age_group"], df3["gender"]))

# Counts of self-reported health by gender
if {"health", "gender"}.issubset(df3.columns):
    print("Distribution 2: Health by Gender")
    display(pd.crosstab(df3["health"], df3["gender"]))

# Income summaries use income_col so the "income" fallback also works
if {income_col, "gender"}.issubset(df3.columns):
    print("Distribution 3: Income by Gender")
    display(pd.pivot_table(df3, index="gender", values=income_col, aggfunc=["count", "mean", "median"]).round(2))

if {income_col, "region"}.issubset(df3.columns):
    print("Distribution 4: Regional Income")
    display(pd.pivot_table(df3, index="region", values=income_col, aggfunc=["count", "mean", "median"]).round(2))

if {"age_group", income_col}.issubset(df3.columns):
    print("Distribution 5: Age-wise Income")
    display(pd.pivot_table(df3, index="age_group", values=income_col, aggfunc=["count", "mean", "median"]).round(2))

Output

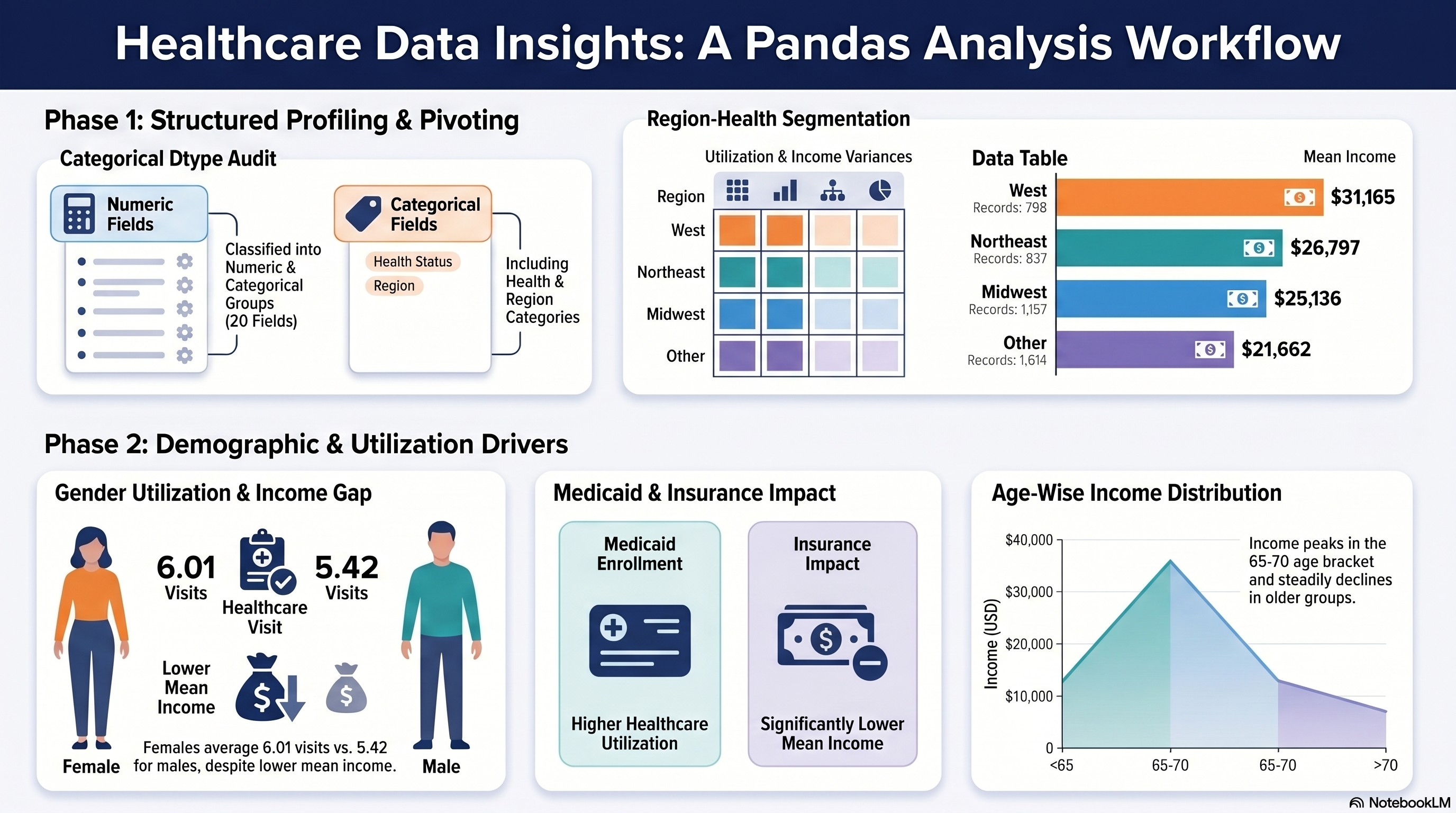

Distribution 1: Age + Gender

| age_group | female | male |
|---|---|---|
| (64.999, 70.0] | 897 | 671 |
| (70.0, 75.0] | 736 | 530 |
| (75.0, 80.0] | 525 | 321 |
| (80.0, 85.0] | 303 | 176 |
| (85.0, 110.0] | 167 | 80 |
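The first label reads (64.999, 70.0] rather than (65.0, 70.0] because of `include_lowest=True`: the intervals produced by `pd.cut` are closed on the right only, so pandas nudges the first left edge slightly downward to pull an age of exactly 65 into the first group. A minimal sketch of that behavior, using made-up ages (the `ages` values are illustrative assumptions, not rows from the dataset):

```python
import pandas as pd

# Made-up ages for illustration only (not taken from the dataset)
ages = pd.Series([65, 68, 70, 71, 90], dtype=float)
bins = [65, 70, 75, 80, 85, 110]

# Default: intervals are (left, right], so an age of exactly 65 is left unbinned
strict = pd.cut(ages, bins=bins)

# include_lowest=True lowers the first left edge a little so 65 is binned too
groups = pd.cut(ages, bins=bins, include_lowest=True)

print(strict.isna().sum())              # ages left unbinned without include_lowest
print(groups.value_counts(sort=False))  # counts per age group, in bin order
```

Without the flag, anyone aged exactly 65 would silently drop out of every table indexed by `age_group`.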

Distribution 2: Health by Gender

| health | female | male |
|---|---|---|
| average | 2093 | 1416 |
| excellent | 193 | 150 |
| poor | 342 | 212 |

Distribution 3: Income by Gender

| gender | count (income_dollars) | mean (income_dollars) | median (income_dollars) |
|---|---|---|---|
| female | 2628 | 22493.48 | 14160.0 |
| male | 1778 | 29377.16 | 20574.0 |

Distribution 4: Regional Income

| region | count (income_dollars) | mean (income_dollars) | median (income_dollars) |
|---|---|---|---|
| midwest | 1157 | 25136.34 | 17875.0 |
| northeast | 837 | 26797.09 | 17413.0 |
| other | 1614 | 21662.84 | 14220.0 |
| west | 798 | 31165.05 | 20656.0 |

Distribution 5: Age-wise Income

| age_group | count (income_dollars) | mean (income_dollars) | median (income_dollars) |
|---|---|---|---|
| (64.999, 70.0] | 1568 | 27488.76 | 19872.0 |
| (70.0, 75.0] | 1266 | 26194.35 | 16986.0 |
| (75.0, 80.0] | 846 | 22997.60 | 15135.0 |
| (80.0, 85.0] | 479 | 20269.47 | 12886.0 |
| (85.0, 110.0] | 247 | 23951.36 | 13940.0 |
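The `income_band` column built in the code is not shown in any of the tables, so here is a small sketch of what its edge-building loop does when several quantiles tie. The helper name `strictly_increasing_edges` and the toy `income` values are assumptions made for illustration; only the loop logic mirrors the code above.

```python
import pandas as pd


def strictly_increasing_edges(quantiles):
    """Hypothetical helper: return bin edges where each edge exceeds the last."""
    edges = [float(quantiles[0])]
    for value in quantiles[1:]:
        # A tied quantile would repeat the previous edge, which pd.cut rejects,
        # so replace it with the previous edge plus 1.
        edges.append(float(value) if value > edges[-1] else edges[-1] + 1)
    return edges


# Toy incomes (made up): half are zero, so the 0%, 20% and 40% quantiles all tie
income = pd.Series([0, 0, 0, 0, 0, 10, 20, 30, 40, 50], dtype=float)
quantiles = income.quantile([0, .2, .4, .6, .8, 1.0]).values

edges = strictly_increasing_edges(quantiles)
bands = pd.cut(income, bins=edges, include_lowest=True)

print(edges)
print(bands.value_counts(sort=False))
```

The bumped edges are artificial, so some bands can end up empty or very narrow, and the bands are no longer true quintiles. When that matters, `pd.qcut(income, 5, duplicates="drop")` is an alternative that merges tied quantiles instead of inventing edges, at the cost of returning fewer than five bands.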