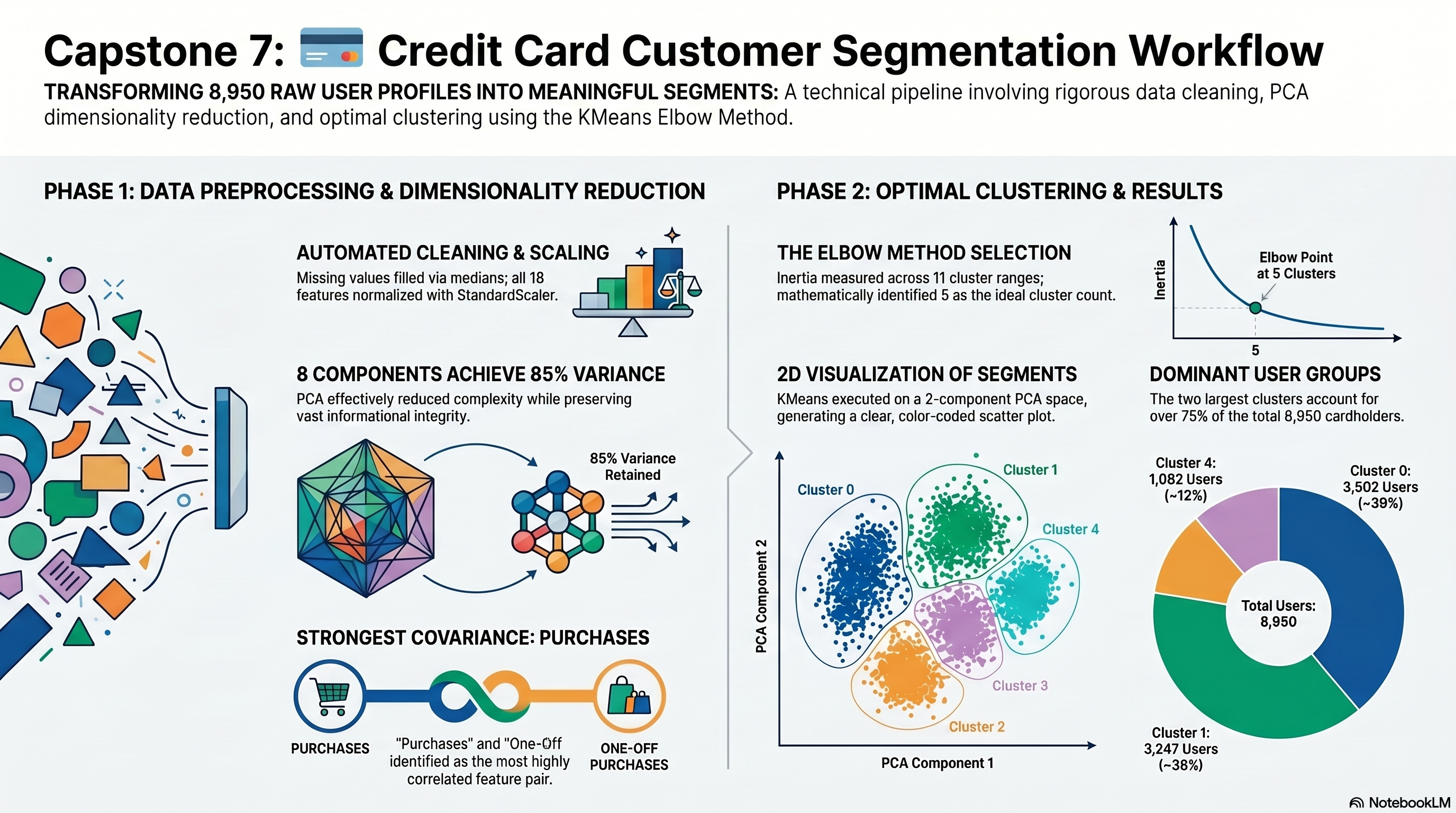

Clean The Credit Card Dataset And Scale The Features

Notebook section: Load, null-handling, and scaling cells

Requirement: Load the dataset, check nulls, handle missing values, and apply StandardScaler before PCA.

The notebook drops the identifier column (CUST_ID), fills missing values in the credit-limit and minimum-payment fields with each column's median, and standardizes the remaining numeric features for PCA.

- Missing values filled: MINIMUM_PAYMENTS (313), CREDIT_LIMIT (1).

- Scaling is applied to the full feature matrix before PCA fitting.
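
The null check behind the counts above can be sketched roughly as follows. This is a minimal illustration, not the notebook's actual cell: the `toy` frame and its values are made up, and only the column names come from the dataset.

```python
import numpy as np
import pandas as pd

# Hypothetical stand-in for the credit card data; values are invented.
toy = pd.DataFrame({
    "CUST_ID": ["C1", "C2", "C3", "C4"],
    "CREDIT_LIMIT": [1000.0, np.nan, 3000.0, 5000.0],
    "MINIMUM_PAYMENTS": [50.0, np.nan, np.nan, 200.0],
})

# Count nulls per column, then keep only the columns that have any.
null_counts = toy.isna().sum()
missing = {name: int(count) for name, count in null_counts.items() if count > 0}
print(missing)
```

Running the same two lines against the real frame should reproduce the per-column counts reported in the list, assuming the data is loaded unchanged.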

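    from sklearn.preprocessing import StandardScaler

    # Drop the identifier column before numeric processing.
    working_df = df.drop(columns=['CUST_ID']).copy()

    # Fill missing values in each affected column with that column's median.
    for column in working_df.columns:
        if working_df[column].isna().any():
            working_df[column] = working_df[column].fillna(working_df[column].median())

    # Standardize every feature to zero mean and unit variance before PCA.
    scaler = StandardScaler()
    scaled = scaler.fit_transform(working_df)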
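
One way to sanity-check the cleaning and scaling steps is to run them on a small frame and confirm the result has no nulls, zero column means, and unit column variances. The sketch below assumes scikit-learn is installed; the `toy` frame and the `clean_and_scale` helper are hypothetical names introduced only for this illustration.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler

def clean_and_scale(frame: pd.DataFrame, id_column: str = "CUST_ID"):
    """Drop the identifier, median-fill nulls, and standardize (illustrative helper)."""
    filled = frame.drop(columns=[id_column]).copy()
    # fillna with a Series of medians fills each column with its own median.
    filled = filled.fillna(filled.median())
    scaled = StandardScaler().fit_transform(filled)
    return filled, scaled

# Hypothetical data; only the column names mirror the dataset.
toy = pd.DataFrame({
    "CUST_ID": ["C1", "C2", "C3", "C4"],
    "CREDIT_LIMIT": [1000.0, np.nan, 3000.0, 5000.0],
    "MINIMUM_PAYMENTS": [50.0, np.nan, np.nan, 200.0],
})

filled, scaled = clean_and_scale(toy)

# StandardScaler uses the population standard deviation (ddof=0),
# so NumPy's default std is the matching check.
print(filled.isna().sum().sum())
print(np.allclose(scaled.mean(axis=0), 0.0))
print(np.allclose(scaled.std(axis=0), 1.0))
```

Filling from `filled.median()` in a single call is equivalent to the column-by-column loop for numeric data, since columns without nulls are left unchanged.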