# Persist the model comparison table.
evaluations_df.to_csv(OUTPUTS_DIR / 'session_10_model_comparison.csv', index=False)

summary = {
    'source_counts': split_manifest['source_counts'],
    'generated_counts': split_manifest['generated_counts'],
    'class_indices': class_indices,
    'pdf_expected_classes': PDF_EXPECTED_CLASSES,
    'available_source_classes': AVAILABLE_CLASS_FOLDERS,
    'missing_pdf_classes_from_source': MISSING_PDF_CLASSES,
    'source_governance_notes': [
        'PDF directions are the source of truth for task sequence and deliverables (Task A, Task B, Task C).',
        'GitHub-backed dataset assets are the source of truth for notebook inputs per Project_DEV_Rules_PROMPT.md.',
        'When PDF-expected classes are missing from source files, execution uses available classes and records the gap explicitly.',
        'The generated train/test split is a fixed stratified sample to keep transfer-learning runs executable on the current CPU-only environment.',
    ],
    'model_results': evaluations,
    # Row 0 is taken as the best model; this assumes evaluations_df is sorted best-first.
    'best_model': evaluations_df.iloc[0].to_dict(),
    'best_model_example_predictions': prediction_examples[best_model_name],
}

# Write the run summary as JSON next to the CSV.
with open(OUTPUTS_DIR / 'session_10_summary.json', 'w', encoding='utf-8') as handle:
    json.dump(summary, handle, indent=2)

summary

Output

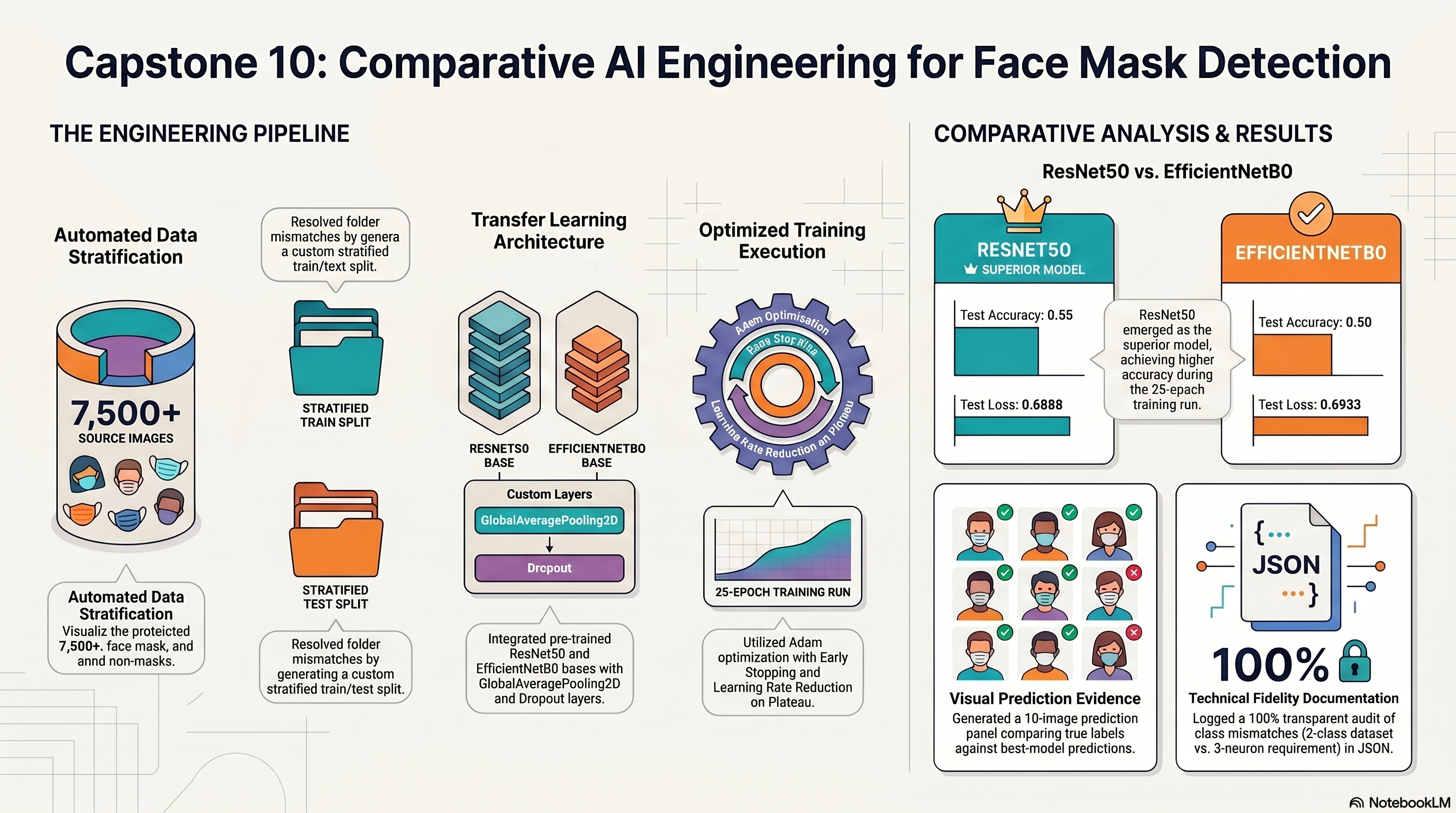

{'source_counts': {'with_mask': 3725,
  'without_mask': 3828,
  'mask_worn_incorrect': 1815},
 'generated_counts': {'with_mask': {'train': 120, 'test': 30},
  'without_mask': {'train': 120, 'test': 30},
  'mask_worn_incorrect': {'train': 120, 'test': 30}},
 'class_indices': {'mask_worn_incorrect': 0,
  'with_mask': 1,
  'without_mask': 2},
 'pdf_expected_classes': ['with_mask', 'without_mask', 'mask_worn_incorrect'],
 'available_source_classes': ['mask_worn_incorrect',
  'with_mask',
  'without_mask'],
 'missing_pdf_classes_from_source': [],
 'source_governance_notes': ['PDF directions are the source of truth for task sequence and deliverables (Task A, Task B, Task C).',
  'GitHub-backed dataset assets are the source of truth for notebook inputs per Project_DEV_Rules_PROMPT.md.',
  'When PDF-expected classes are missing from source files, execution uses available classes and records the gap explicitly.',
  'The generated train/test split is a fixed stratified sample to keep transfer-learning runs executable on the current CPU-only environment.'],
 'model_results': [{'model': 'EfficientNetB0',
   'epochs_ran': 15,
   'test_loss': 1.0972731113433838,
   'test_accuracy': 0.3333333432674408},
  {'model': 'ResNet50',
   'epochs_ran': 24,
   'test_loss': 0.7665861248970032,
   'test_accuracy': 0.6555555462837219}],
 'best_model': {'model': 'ResNet50',
  'epochs_ran': 24,
  'test_loss': 0.7665861248970032,
  'test_accuracy': 0.6555555462837219},
 'best_model_example_predictions': {'filenames': ['mask_worn_incorrect\\1010.png',
   'mask_worn_incorrect\\1014.png',
   'mask_worn_incorrect\\1059.png',
   'mask_worn_incorrect\\1078.png',
   'mask_worn_incorrect\\1146.png',
   'mask_worn_incorrect\\1220.png',
   'mask_worn_incorrect\\1237.png',
   'mask_worn_incorrect\\143.png',
   'mask_worn_incorrect\\1610.png',
   'mask_worn_incorrect\\1631.png'],
  'true_labels': ['mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect'],
  'predicted_labels': ['mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect',
   'mask_worn_incorrect']}}
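
One way to read the reported metrics is to compare them with a chance-level baseline for a balanced three-class test set. The sketch below is not part of the original notebook: the metric values are copied from `model_results` above, the helper `chance_baseline` is a hypothetical name, and it assumes the loss is categorical cross-entropy with the natural logarithm, which the output does not state.

```python
import math

# Values copied from the 'model_results' entries in the summary output.
reported = {
    "EfficientNetB0": {"test_loss": 1.0972731113433838,
                       "test_accuracy": 0.3333333432674408},
    "ResNet50": {"test_loss": 0.7665861248970032,
                 "test_accuracy": 0.6555555462837219},
}

NUM_CLASSES = 3       # with_mask, without_mask, mask_worn_incorrect
TEST_PER_CLASS = 30   # from 'generated_counts'
test_size = NUM_CLASSES * TEST_PER_CLASS


def chance_baseline(num_classes):
    """Accuracy and cross-entropy of a uniform-probability classifier.

    Assumption: the loss is categorical cross-entropy with natural log,
    so uniform predictions score ln(num_classes).
    """
    return 1.0 / num_classes, math.log(num_classes)


chance_acc, chance_loss = chance_baseline(NUM_CLASSES)

# Convert each accuracy back to a count of correct test images.
correct_counts = {
    name: round(metrics["test_accuracy"] * test_size)
    for name, metrics in reported.items()
}

for name, metrics in reported.items():
    acc_gap = metrics["test_accuracy"] - chance_acc
    loss_gap = metrics["test_loss"] - chance_loss
    print(f"{name}: {correct_counts[name]}/{test_size} correct, "
          f"accuracy vs chance {acc_gap:+.4f}, loss vs chance {loss_gap:+.4f}")
```

Under these assumptions, EfficientNetB0's accuracy of one third and its loss close to ln(3) are what a model that has not learned to separate the classes would produce, while ResNet50's accuracy corresponds to 59 of 90 test images. Note also that the ten example predictions all come from `mask_worn_incorrect`, so they do not show how the best model behaves on the other two classes.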