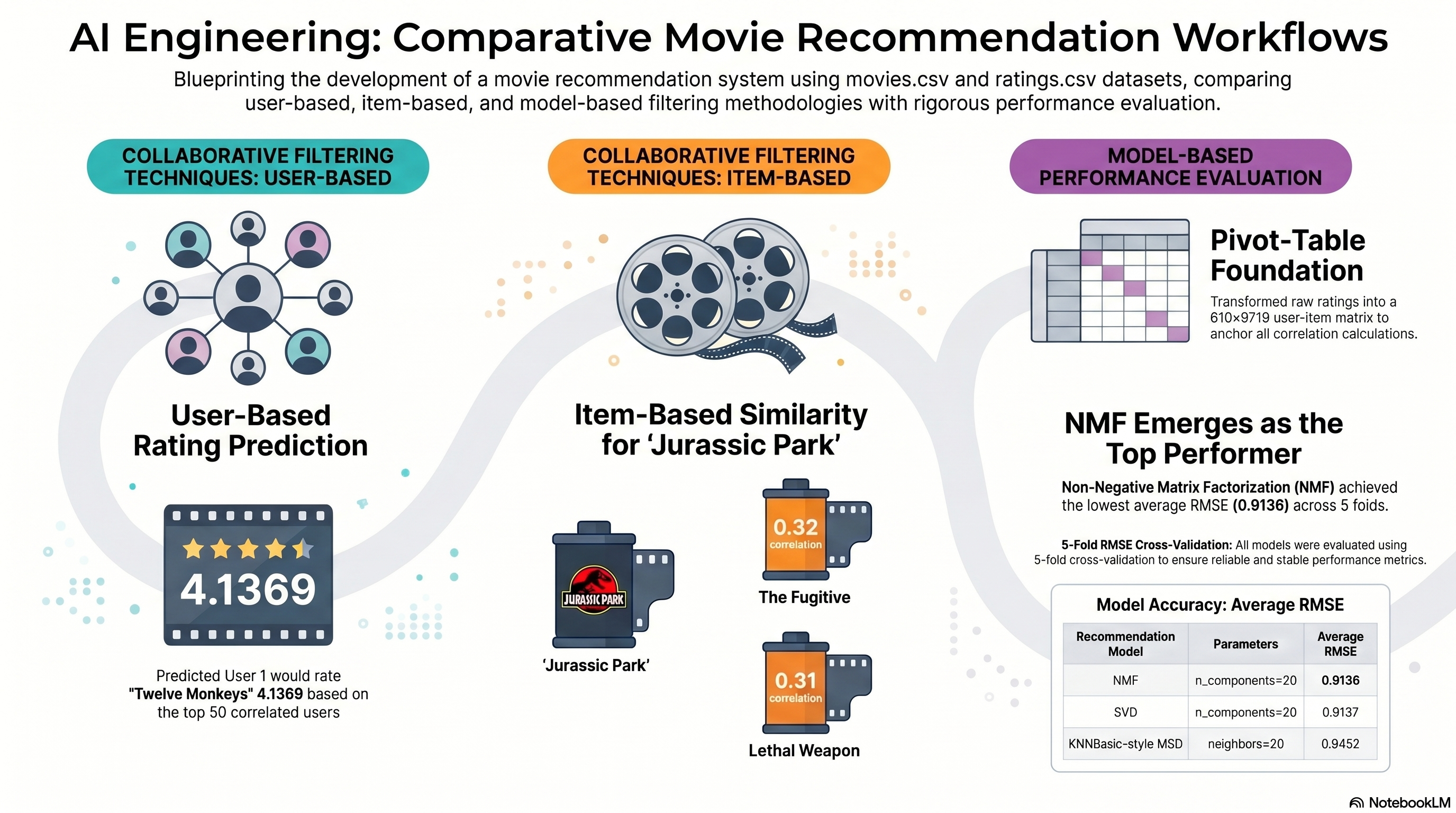

Merge The Ratings Data And Build The User-Item Matrix

Notebook section: Load, merge, and pivot-table cells

Requirement: Load both CSV files, merge on movieId, and create the user-item matrix required for collaborative filtering.

The notebook merges the ratings with the movie titles and pivots the result into a full user-item matrix, which serves as the common input to the user-based, item-based, and model-based recommendation steps.

- The staged movie with movieId 32 is Twelve Monkeys (a.k.a. 12 Monkeys) (1995).

- User-based and item-based recommendation steps both start from the same merged pivot-table structure.
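One way to see how both approaches can start from a single pivot table: user-based filtering compares rows (users), and item-based filtering compares columns (titles), which is the same computation on the transposed matrix. The sketch below assumes cosine similarity with unrated cells filled as 0, a common simplification. The similarity measure the notebook actually uses is not shown in this section, and the toy matrix and the helper `cosine_similarity_rows` are hypothetical.

```python
import numpy as np
import pandas as pd

# Hypothetical toy user-item matrix (rows: userId, columns: title); NaN = unrated
user_item = pd.DataFrame(
    {
        "Movie A": [5.0, 4.0, np.nan],
        "Movie B": [np.nan, 2.0, 1.0],
        "Movie C": [3.0, np.nan, 4.0],
    },
    index=pd.Index([1, 2, 3], name="userId"),
)


def cosine_similarity_rows(df: pd.DataFrame) -> pd.DataFrame:
    """Hypothetical helper: cosine similarity between the rows of df, NaN treated as 0."""
    values = df.fillna(0.0).to_numpy()
    norms = np.linalg.norm(values, axis=1, keepdims=True)
    norms[norms == 0] = 1.0  # avoid division by zero for rows with no ratings
    unit = values / norms
    return pd.DataFrame(unit @ unit.T, index=df.index, columns=df.index)


# User-based: similarity between users (rows of the matrix)
user_sim = cosine_similarity_rows(user_item)

# Item-based: the same function on the transpose compares titles (columns)
item_sim = cosine_similarity_rows(user_item.T)

print(user_sim.round(3))
print(item_sim.round(3))
```

Filling NaN with 0 treats "unrated" as a low rating, so real pipelines often mean-center each user's ratings first; that refinement is left out here to keep the row/column symmetry visible.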

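```python
# Attach titles to ratings; how='left' keeps every rating even if its movieId has no title
merged = ratings.merge(movies[['movieId', 'title']], on='movieId', how='left')

# One row per userId, one column per title; unrated cells are NaN (duplicate user/title pairs are averaged)
user_item = merged.pivot_table(index='userId', columns='title', values='rating')
```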
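For a quick check of the load, merge, and pivot steps end to end, here is a minimal self-contained sketch on a tiny made-up dataset. The CSV contents are hypothetical stand-ins read through `io.StringIO` so the example runs without files; only the column names (`userId`, `movieId`, `rating`, `title`) follow the code above, and the actual file paths used by the notebook are not shown in this section.

```python
import io

import pandas as pd

# Hypothetical toy CSV contents; in the notebook these come from the real CSV files
RATINGS_CSV = """userId,movieId,rating
1,1,4.0
1,32,5.0
2,32,3.5
2,2,4.5
3,1,2.0
"""
MOVIES_CSV = """movieId,title
1,Toy Story (1995)
2,Jumanji (1995)
32,Twelve Monkeys (a.k.a. 12 Monkeys) (1995)
"""

# Load both tables
ratings = pd.read_csv(io.StringIO(RATINGS_CSV))
movies = pd.read_csv(io.StringIO(MOVIES_CSV))

# Merge on movieId, keeping every rating
merged = ratings.merge(movies[['movieId', 'title']], on='movieId', how='left')

# Pivot into the user-item matrix: rows are users, columns are titles
user_item = merged.pivot_table(index='userId', columns='title', values='rating')

# Look up the title for a given movieId directly in the movies table
title_32 = movies.loc[movies['movieId'] == 32, 'title'].iloc[0]

print(user_item.shape)
print(title_32)
```

Because the matrix is keyed by `title` rather than `movieId`, two different movieIds that share a title would collapse into one column, with `pivot_table` averaging their ratings through its default `aggfunc='mean'`. Pivoting on `movieId` and mapping to titles afterwards avoids that if it matters.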