Capstone 11 Scope

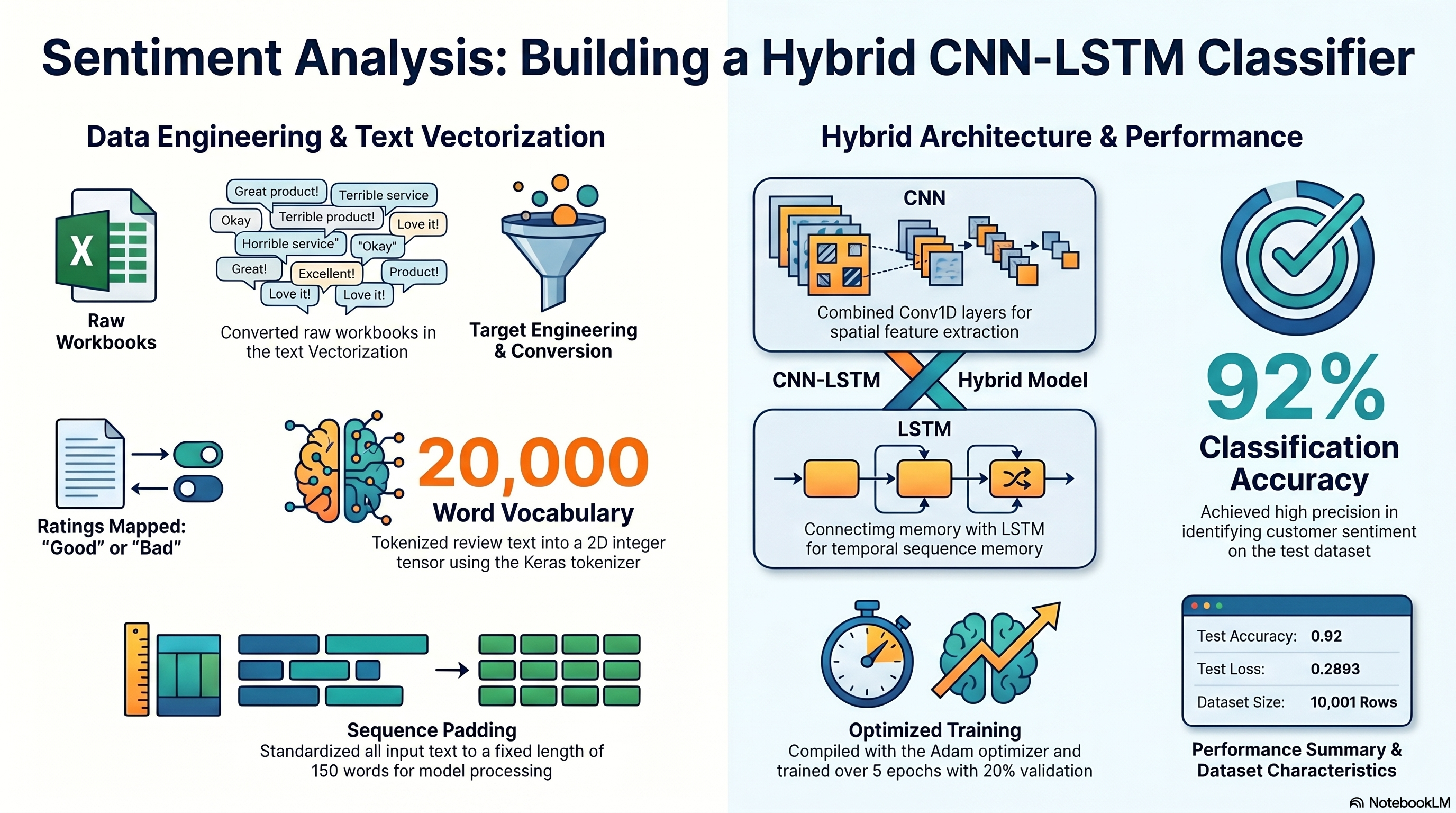

Capstone 11 converts the copied review-classification assignment into an executed CNN-LSTM notebook with a converted CSV handoff, training history, prediction samples, and saved summary outputs.

Primary staged source file: GrammarandProductReviews.xlsx.

The executed notebook converts the workbook to CSV first and preserves that conversion as an output artifact for the site workflow.

Original Project PDF

The copied project directions are embedded here for direct comparison against the notebook and output artifacts.

Requirement Walkthrough

Each walkthrough block maps the copied PDF requirements to the executed notebook cells, exported outputs, and reviewable evidence staged with this capstone.

11a

Load The Workbook And Create The Target Column

Notebook section: Workbook load and target-engineering cells

Requirement: Load the staged review data, reconcile the workbook-versus-CSV mismatch, and create the target column from reviews.rating.

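The copied PDF names a CSV file, but the staged source is an .xlsx workbook. The notebook therefore reads the workbook, exports a converted CSV for the site, and creates the binary target the copied PDF describes: target is 1 when reviews.rating is greater than 3 and 0 otherwise.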

Results Capture

- Converted CSV artifact: GrammarandProductReviews_converted.csv.

- Target distribution is {"1":9154,"0":847}.

df = pd.read_excel(WORKBOOK_URL)

df.to_csv(converted_csv_path, index=False)

df['target'] = (df['reviews.rating'] > 3).astype(int)
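As a rough illustration, the thresholding rule and the class balance it produces can be sketched in plain Python. The ratings list is made-up illustration data, the counts are the target distribution reported above, and `majority_baseline` is a hypothetical name for the accuracy a model would reach by always predicting the positive class.

```python
# Plain-Python equivalent of (df['reviews.rating'] > 3).astype(int).
# The ratings below are illustrative values, not rows from the workbook.
ratings = [5, 4, 3, 1, 5, 2]
targets = [int(r > 3) for r in ratings]
print(targets)

# Counts reported in the Results Capture above.
target_distribution = {1: 9154, 0: 847}
total = sum(target_distribution.values())

# Accuracy of a trivial classifier that always predicts the majority class
# (hypothetical helper value, not something the notebook computes).
majority_baseline = max(target_distribution.values()) / total
print(total, round(majority_baseline, 4))
```

Because roughly 91.5% of the reviews are positive, it may be more informative to read the reported test accuracy against this baseline than against 50%.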

11b

Tokenize And Pad The Review Text

Notebook section: Tokenizer and sequence-preparation cells

Requirement: Tokenize the text with MAX_NB_WORDS = 20000 and pad the train/test sequences to MAX_SEQUENCE_LENGTH = 150.

The notebook follows the copied PDF constants directly and prepares padded train/test tensors before the CNN-LSTM model is built.

Results Capture

- MAX_NB_WORDS = 20000.

- MAX_SEQUENCE_LENGTH = 150.

tokenizer = tf.keras.preprocessing.text.Tokenizer(num_words=MAX_NB_WORDS)

tokenizer.fit_on_texts(X_train)

X_train_pad = tf.keras.preprocessing.sequence.pad_sequences(X_train_seq, maxlen=MAX_SEQUENCE_LENGTH)
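To make the padding step concrete, here is a minimal sketch of what happens to short and long sequences. It assumes the Keras defaults for pad_sequences (padding='pre', truncating='pre'), which the notebook does not override. `pad_pre` is a hypothetical helper written for illustration, not the Keras implementation, and the token ids are invented.

```python
def pad_pre(sequences, maxlen, value=0):
    """Left-pad short sequences and keep the last `maxlen` tokens of long ones.

    Mimics pad_sequences with its default settings (padding='pre',
    truncating='pre'); this is an illustration only.
    """
    padded = []
    for seq in sequences:
        kept = list(seq)[-maxlen:]  # drop tokens from the front if too long
        padded.append([value] * (maxlen - len(kept)) + kept)
    return padded


# Token ids are made up; maxlen is shortened from 150 to 5 for readability.
example = pad_pre([[7, 2], [1, 2, 3, 4, 5, 6, 7]], maxlen=5)
print(example)
```

Under these defaults a long review keeps its final tokens, so the end of the text is what reaches the model.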

11c

Train And Evaluate The CNN-LSTM Hybrid Model

Notebook section: Model build, fit, and evaluation cells

Requirement: Build the CNN-LSTM hybrid network, train it for 5 epochs with batch size 64, and report the test loss and accuracy.

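The notebook trains the model for 5 epochs with batch size 64, holding out 20% of the training split for validation, and exports the training history, prediction samples, and final evaluation summary for the copied CNN-LSTM workflow.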

Results Capture

- Test accuracy is 0.92.

- Test loss is 0.2893.

model = tf.keras.Sequential([... tf.keras.layers.Conv1D(...), tf.keras.layers.LSTM(64), tf.keras.layers.Dense(2, activation='softmax')])

history = model.fit(...)

test_loss, test_accuracy = model.evaluate(X_test_pad, y_test_onehot, verbose=0)
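The full layer stack in the Embedded Notebook Preview places two Conv1D(64, 5) and MaxPooling1D(pool_size=5) stages in front of the LSTM. The sketch below traces how those stages shorten the 150-step sequence. It assumes the Keras defaults that the notebook leaves unchanged ('valid' padding, stride 1 for the convolution, pooling stride equal to the pool size); the helper names are hypothetical.

```python
def conv1d_valid_len(length, kernel_size):
    # 'valid' padding, stride 1: one output per full kernel window.
    return length - kernel_size + 1


def maxpool1d_valid_len(length, pool_size):
    # 'valid' padding with stride equal to pool_size: leftover steps are dropped.
    return (length - pool_size) // pool_size + 1


length = 150  # MAX_SEQUENCE_LENGTH
trace = [length]
for _ in range(2):  # two Conv1D + MaxPooling1D stages
    length = conv1d_valid_len(length, 5)
    trace.append(length)
    length = maxpool1d_valid_len(length, 5)
    trace.append(length)

print(trace)
```

Under those assumptions the LSTM would receive 5 timesteps of 64 features each, since Dropout does not change the shape.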

Associated Artifact

Training History

Saved accuracy and loss curves for the CNN-LSTM run.
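One way to read the saved curves is to look for the epoch with the lowest validation loss and watch the gap between validation and training loss. The sketch below uses the per-epoch values shown in the notebook's training-history output; `best_epoch` and `gaps` are hypothetical names, and the interpretation is a suggestion rather than a conclusion the notebook states.

```python
# Training and validation loss per epoch, copied from the notebook's history output.
train_loss = [0.309694, 0.277587, 0.210896, 0.154717, 0.107533]
val_loss = [0.259024, 0.237573, 0.226740, 0.251219, 0.295029]

# Epoch index (0-based) with the lowest validation loss.
best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__)

# Validation minus training loss; a widening gap can be a sign of overfitting.
gaps = [round(v - t, 6) for t, v in zip(train_loss, val_loss)]

print(best_epoch, val_loss[best_epoch])
print(gaps)
```

In this run the validation loss is lowest at index 2 and rises afterwards while training loss keeps falling.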

Data And Artifact Links

The links below open the copied project files, executed notebook, generated outputs, and staged evidence artifacts for this capstone.

- Project PDF: Open the copied project directions PDF for this capstone.

- Notebook Evidence: View the notebook as a readable page or download the original file.

- Requirements File: Open the generated requirements file for the website workflow.

- Original Spreadsheet Dataset: Open the original spreadsheet source staged for this capstone or download the raw file.

- JSON Output: Open the generated JSON artifact or download the original file.

- CSV Output: Open the generated CSV handoff or download the original file.

- Converted CSV: CSV export created from the staged workbook source.

- Training History CSV: Exported epoch-by-epoch loss and accuracy history.

- Prediction Samples CSV: Exported sample review predictions from the test split.

- Summary JSON: Structured summary of target distribution and evaluation metrics.

Interactive Neural Network Lab

This TensorFlow Playground embed is a concept sandbox for the representation-learning ideas that sit underneath the Session 11 sequence-model work. It does not load review text, embeddings, or the CNN-LSTM notebook; instead, it uses synthetic datasets to make hidden-layer capacity, nonlinearity, and regularization easier to understand before you return to the real text-model outputs.

What This Is

- The playground is not a sequence model and does not run the actual product-review or grammar workflow from the notebook.

- What it does show well is how a network transforms inputs into more separable internal representations before a final classifier makes a decision.

- That is the reason it belongs on this page: it helps explain the network behavior conceptually, even though the notebook evidence still lives in the real text-model artifacts.

How To Use It

- Choose a preset card above the playground based on the concept you want to see: basic separation, extra capacity, nonlinear features, or regularization.

- Click the preset Load button and then press the Play button inside the playground.

- Watch how the output region changes over epochs and how difficult patterns need richer internal structure to separate cleanly.

- Use the presets as analogies for what the deeper review-analysis model is doing with learned features under the hood.

What To Look For

- Decision Boundary Basics: expect the simplest demonstration of a network creating a usable class split.

- Hidden Layers On Spiral Data: expect the best illustration of why tangled patterns need more representational depth.

- ReLU On XOR: expect a compact example of feature interaction and nonlinearity.

- Regularization Under Noise: expect a useful analogy for keeping the model from overfitting noisy language signals.

Preset 1

Decision Boundary Basics

Starts with a compact tanh classifier on the circle dataset so you can see how a model gradually forms a usable separation. For the review-analysis capstone, this stands in for the final classifier stage that acts on learned text features, even though the playground uses 2D synthetic points instead of token sequences.

Preset 2

Hidden Layers On Spiral Data

Uses a deeper network on a harder spiral problem to illustrate why more capacity can help with tangled patterns. That is useful here as a conceptual bridge to sequence models, where the network needs richer internal representations before it can separate subtle language signals like sentiment or grammatical structure.

Preset 3

ReLU On XOR

Shows a ReLU network solving XOR, a classic example of a problem that needs nonlinear feature combinations. That directly supports the Session 11 story: text tasks often depend on interactions between features rather than any one token or score acting alone.
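For intuition about why XOR needs a nonlinear combination, here is a tiny hand-built example. The weights are picked by hand rather than learned, and `xor_relu` is a hypothetical function; it is unrelated to the playground's internals or to the notebook's model.

```python
def relu(x):
    return max(0.0, x)


def xor_relu(x1, x2):
    # Two hidden ReLU units with hand-picked weights (not learned).
    h1 = relu(x1 + x2)        # fires when at least one input is on
    h2 = relu(x1 + x2 - 1)    # fires only when both inputs are on
    return h1 - 2 * h2        # linear readout cancels the "both on" case


outputs = {(a, b): xor_relu(a, b) for a in (0, 1) for b in (0, 1)}
print(outputs)
```

No single linear function of x1 and x2 produces this pattern; the second hidden unit supplies the interaction term.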

Preset 4

Regularization Under Noise

Uses noisy Gaussian data with regularization to show how the model avoids overreacting to messy inputs. For the review-analysis capstone, this is the closest analogy to handling noisy language patterns, uneven phrasing, and generalization beyond memorized training examples.


Use these presets to build intuition for how a network forms richer internal features before making a final decision. They are concept support only; the graded Session 11 evidence still comes from the notebook, screenshots, exported outputs, and walkthrough content tied to the real review-analysis workflow.

Colab Notebook

This section provides the notebook preview, launch link, and project file links.

The notebook opens in Google Colab when a launch URL is configured, and the project files and outputs remain available here on the site.

Embedded Notebook Preview

Cell 1 Markdown

Capstone Session 11

This notebook is generated from the copied Capstone_Session_11.pdf directions and the staged GrammarandProductReviews.xlsx dataset.

Cell 2 Markdown

Objective

Build the required CNN-LSTM hybrid model to classify product reviews as positive or negative using the staged workbook data.

Cell 3 Markdown

Source Note

The copied PDF names GrammarandProductReviews.csv, but the staged source file is GrammarandProductReviews.xlsx. This notebook loads the workbook and exports a converted CSV artifact for the website evidence flow before training the model.

Cell 4 Code · python

from pathlib import Path

import json

import os

import sys

from urllib.parse import quote

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import seaborn as sns

import tensorflow as tf

from IPython.display import display

from sklearn.metrics import accuracy_score

from sklearn.model_selection import train_test_split

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

tf.keras.utils.set_random_seed(42)

IS_COLAB = 'google.colab' in sys.modules

GITHUB_REPO_OWNER = 'FrancisBurnet'

GITHUB_REPO_NAME = 'francisburnet'

GITHUB_REPO_BRANCH = 'main'

CAPSTONE_ROOT = Path('Incremental Capstones/Deep Learning Specialization/Capstone Session 11')

WORKBOOK_FILENAME = 'GrammarandProductReviews.xlsx'

def build_github_url(relative_path: Path, media: bool = False) -> str:

    # Percent-encode spaces and other unsafe characters but keep path separators.

    encoded_path = quote(relative_path.as_posix(), safe='/')

    if media:

        # Build the media.githubusercontent.com form of the URL.

        return (

            f"https://media.githubusercontent.com/media/{GITHUB_REPO_OWNER}/{GITHUB_REPO_NAME}/"

            f"{GITHUB_REPO_BRANCH}/{encoded_path}"

        )

    # Otherwise build the raw.githubusercontent.com form of the URL.

    return (

        f"https://raw.githubusercontent.com/{GITHUB_REPO_OWNER}/{GITHUB_REPO_NAME}/"

        f"{GITHUB_REPO_BRANCH}/{encoded_path}"

    )

def resolve_capstone_dir() -> Path | None:

    # Walk up from the working directory looking for the capstone folder.

    current = Path.cwd().resolve()

    capstone_parts = CAPSTONE_ROOT.parts

    for candidate in [current, *current.parents]:

        # Case 1: the candidate path itself ends with the capstone path.

        if len(candidate.parts) >= len(capstone_parts) and candidate.parts[-len(capstone_parts):] == capstone_parts:

            return candidate

        # Case 2: the capstone path exists below the candidate.

        nested_candidate = candidate / CAPSTONE_ROOT

        if nested_candidate.exists():

            return nested_candidate

    return None

CAPSTONE_DIR = resolve_capstone_dir()

WORKBOOK_URL = build_github_url(CAPSTONE_ROOT / WORKBOOK_FILENAME, media=True)

if CAPSTONE_DIR is not None:

    OUTPUT_ROOT = CAPSTONE_DIR

    OUTPUT_MODE = 'permanent capstone outputs'

    OUTPUT_DISPLAY = (CAPSTONE_ROOT / 'outputs').as_posix()

else:

    runtime_root = Path('/content/capstone-session-11-runtime') if IS_COLAB else Path.cwd().resolve() / 'capstone-session-11-runtime'

    OUTPUT_ROOT = runtime_root

    OUTPUT_MODE = 'runtime scratch outputs; export final artifacts back into the capstone outputs folder'

    OUTPUT_DISPLAY = 'capstone-session-11-runtime/outputs'

OUTPUTS_DIR = (OUTPUT_ROOT / 'outputs').resolve()

PLOTS_DIR = OUTPUTS_DIR / 'plots'

OUTPUTS_DIR.mkdir(parents=True, exist_ok=True)

PLOTS_DIR.mkdir(parents=True, exist_ok=True)

sns.set_theme(style='whitegrid')

print('Runtime:', 'Google Colab' if IS_COLAB else 'Notebook runtime')

print('Capstone artifact path:', CAPSTONE_ROOT.as_posix())

print('Workbook source:', WORKBOOK_URL)

print('Output mode:', OUTPUT_MODE)

print('Output target:', OUTPUT_DISPLAY)

Output

Runtime: Notebook runtime

Capstone artifact path: Incremental Capstones/Deep Learning Specialization/Capstone Session 11

Workbook source: https://media.githubusercontent.com/media/FrancisBurnet/francisburnet/main/Incremental%20Capstones/Deep%20Learning%20Specialization/Capstone%20Session%2011/GrammarandProductReviews.xlsx

Output mode: permanent capstone outputs

Output target: Incremental Capstones/Deep Learning Specialization/Capstone Session 11/outputs
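The `%20` sequences in the workbook URL above come from percent-encoding the spaces in the folder names. A minimal sketch of that step, using the same `quote(..., safe='/')` call as the notebook; `PurePosixPath` is swapped in here only so the example behaves the same on any operating system.

```python
from pathlib import PurePosixPath
from urllib.parse import quote

# Same folder and file names as CAPSTONE_ROOT and WORKBOOK_FILENAME in the notebook.
relative_path = PurePosixPath(
    'Incremental Capstones/Deep Learning Specialization/Capstone Session 11'
) / 'GrammarandProductReviews.xlsx'

# safe='/' keeps the path separators while spaces become %20.
encoded_path = quote(relative_path.as_posix(), safe='/')
print(encoded_path)
```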

Cell 5 Code · python

df = pd.read_excel(WORKBOOK_URL)

converted_csv_path = OUTPUTS_DIR / 'GrammarandProductReviews_converted.csv'

df.to_csv(converted_csv_path, index=False)

df['reviews.text'] = df['reviews.text'].fillna('').astype(str)

df['target'] = (df['reviews.rating'] > 3).astype(int)

display(df[['reviews.rating', 'reviews.text', 'target']].head())

print('Workbook source used:', WORKBOOK_URL)

print('Shape:', df.shape)

print('Target distribution:', df['target'].value_counts().to_dict())

Cell 6 Code · python

X_train, X_test, y_train, y_test = train_test_split(

    df['reviews.text'],

    df['target'],

    test_size=0.2,

    random_state=42,

    stratify=df['target'],

)

MAX_NB_WORDS = 20000

MAX_SEQUENCE_LENGTH = 150

tokenizer = tf.keras.preprocessing.text.Tokenizer(num_words=MAX_NB_WORDS)

tokenizer.fit_on_texts(X_train)

X_train_seq = tokenizer.texts_to_sequences(X_train)

X_test_seq = tokenizer.texts_to_sequences(X_test)

X_train_pad = tf.keras.preprocessing.sequence.pad_sequences(X_train_seq, maxlen=MAX_SEQUENCE_LENGTH)

X_test_pad = tf.keras.preprocessing.sequence.pad_sequences(X_test_seq, maxlen=MAX_SEQUENCE_LENGTH)

y_train_onehot = tf.keras.utils.to_categorical(y_train, num_classes=2)

y_test_onehot = tf.keras.utils.to_categorical(y_test, num_classes=2)

print('Padded train shape:', X_train_pad.shape)

print('Padded test shape:', X_test_pad.shape)

Output

Padded train shape: (8000, 150)

Padded test shape: (2001, 150)
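The padded shapes above follow from simple split arithmetic, sketched here for reference. It assumes scikit-learn rounds a fractional test share up and that Keras takes the validation share from the end of the training arrays; the variable names are hypothetical and the row count is the dataset shape reported in the notebook summary.

```python
import math

n_rows = 10001          # dataset_shape[0] reported in the summary output
test_size = 0.2
validation_split = 0.2
batch_size = 64

# Assumed scikit-learn behaviour: round the test share up, remainder to training.
n_test = math.ceil(n_rows * test_size)
n_train = n_rows - n_test

# Assumed Keras behaviour: hold out the trailing validation_split share.
n_fit = int(n_train * (1 - validation_split))
n_val = n_train - n_fit

# Number of gradient updates per epoch at the given batch size.
steps_per_epoch = math.ceil(n_fit / batch_size)
print(n_train, n_test, n_fit, n_val, steps_per_epoch)
```

The 8000 and 2001 row counts match the printed shapes, and the 6400 and 1600 row counts are consistent with the accuracy values in the training history (for example, 0.913125 is 5844 / 6400).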

Cell 7 Code · python

model = tf.keras.Sequential([

    tf.keras.layers.Input(shape=(MAX_SEQUENCE_LENGTH,), dtype='int32'),

    tf.keras.layers.Embedding(input_dim=MAX_NB_WORDS, output_dim=50, input_length=MAX_SEQUENCE_LENGTH),

    tf.keras.layers.Conv1D(64, 5, activation='relu'),

    tf.keras.layers.MaxPooling1D(pool_size=5),

    tf.keras.layers.Dropout(0.2),

    tf.keras.layers.Conv1D(64, 5, activation='relu'),

    tf.keras.layers.MaxPooling1D(pool_size=5),

    tf.keras.layers.Dropout(0.2),

    tf.keras.layers.LSTM(64),

    tf.keras.layers.Dense(2, activation='softmax'),

])

model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])

history = model.fit(

    X_train_pad,

    y_train_onehot,

    epochs=5,

    batch_size=64,

    validation_split=0.2,

    verbose=0,

)

pd.DataFrame(history.history).head()

Output

WARNING:tensorflow:TensorFlow GPU support is not available on native Windows for TensorFlow >= 2.11. Even if CUDA/cuDNN are installed, GPU will not be used. Please use WSL2 or the TensorFlow-DirectML plugin.

X:\SIMPLILEARN\.venv\Lib\site-packages\keras\src\layers\core\embedding.py:103: UserWarning: Argument `input_length` is deprecated. Just remove it.

warnings.warn(

| epoch | accuracy | loss | val_accuracy | val_loss |
|---|---|---|---|---|
| 0 | 0.913125 | 0.309694 | 0.923750 | 0.259024 |
| 1 | 0.913125 | 0.277587 | 0.923750 | 0.237573 |
| 2 | 0.928125 | 0.210896 | 0.925625 | 0.226740 |
| 3 | 0.946406 | 0.154717 | 0.927500 | 0.251219 |
| 4 | 0.964375 | 0.107533 | 0.922500 | 0.295029 |

Cell 8 Code · python

test_probabilities = model.predict(X_test_pad, verbose=0)

test_predictions = np.argmax(test_probabilities, axis=1)

test_loss, test_accuracy = model.evaluate(X_test_pad, y_test_onehot, verbose=0)

print('Test loss:', round(float(test_loss), 4))

print('Test accuracy:', round(float(test_accuracy), 4))

print('Sklearn accuracy:', round(float(accuracy_score(y_test, test_predictions)), 4))

Output

Test loss: 0.2893

Test accuracy: 0.92

Sklearn accuracy: 0.92
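The evaluation cell relies on two small conversions: integer targets are one-hot encoded for the softmax output, and predicted class ids are recovered with an argmax over the probabilities. A plain-Python sketch of both follows; the helper names, labels, and probabilities are invented for illustration, and the notebook itself uses `to_categorical` and `np.argmax`.

```python
def to_one_hot(labels, num_classes=2):
    # Same idea as tf.keras.utils.to_categorical for integer class labels.
    return [[1.0 if k == label else 0.0 for k in range(num_classes)]
            for label in labels]


def argmax_rows(rows):
    # Index of the largest value in each row, like np.argmax(rows, axis=1).
    return [max(range(len(row)), key=row.__getitem__) for row in rows]


labels = [1, 0, 1]                                      # illustrative targets
probabilities = [[0.1, 0.9], [0.7, 0.3], [0.6, 0.4]]    # illustrative softmax outputs

one_hot = to_one_hot(labels)
predicted = argmax_rows(probabilities)

# Fraction of predictions that match the integer targets.
accuracy = sum(p == y for p, y in zip(predicted, labels)) / len(labels)
print(one_hot, predicted, accuracy)
```

This is why the Keras accuracy (computed against one-hot targets) and the scikit-learn accuracy (computed against integer targets) agree in the output above.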

Cell 9 Code · python

fig, axes = plt.subplots(1, 2, figsize=(12, 4))

axes[0].plot(history.history['accuracy'], label='train')

axes[0].plot(history.history['val_accuracy'], label='validation')

axes[0].set_title('Accuracy by Epoch')

axes[0].legend()

axes[1].plot(history.history['loss'], label='train')

axes[1].plot(history.history['val_loss'], label='validation')

axes[1].set_title('Loss by Epoch')

axes[1].legend()

fig.tight_layout()

fig.savefig(PLOTS_DIR / 'training_history.png', dpi=150)

plt.show()

plt.close(fig)

Output

<Figure size 1200x400 with 2 Axes>

Cell 10 Code · python

history_df = pd.DataFrame(history.history)

history_df.to_csv(OUTPUTS_DIR / 'session_11_training_history.csv', index=False)

prediction_samples = pd.DataFrame({

    'review_text': X_test.reset_index(drop=True).head(100),

    'actual': y_test.reset_index(drop=True).head(100),

    'predicted': pd.Series(test_predictions).head(100),

})

prediction_samples.to_csv(OUTPUTS_DIR / 'session_11_prediction_samples.csv', index=False)

summary = {

    'dataset_shape': list(df.shape),

    'converted_csv_path': converted_csv_path.name,

    'target_distribution': df['target'].value_counts().to_dict(),

    'test_loss': float(test_loss),

    'test_accuracy': float(test_accuracy),

    'max_nb_words': MAX_NB_WORDS,

    'max_sequence_length': MAX_SEQUENCE_LENGTH,

}

with open(OUTPUTS_DIR / 'session_11_summary.json', 'w', encoding='utf-8') as handle:

    json.dump(summary, handle, indent=2)

summary

Output

{'dataset_shape': [10001, 26],

'converted_csv_path': 'GrammarandProductReviews_converted.csv',

'target_distribution': {1: 9154, 0: 847},

'test_loss': 0.2893032133579254,

'test_accuracy': 0.9200399518013,

'max_nb_words': 20000,

'max_sequence_length': 150}
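The in-memory summary above has integer keys in target_distribution, while the walkthrough reports the same counts with string keys. One likely explanation, assuming the walkthrough values were read from the saved JSON file, is that JSON object keys are always strings. A minimal round-trip sketch:

```python
import json

# Same counts as the notebook summary; only this one field is reproduced here.
summary = {'target_distribution': {1: 9154, 0: 847}}

# json.dumps converts the integer keys to strings when serializing.
encoded = json.dumps(summary)
decoded = json.loads(encoded)

print(encoded)
print(list(decoded['target_distribution'].keys()))
```

Code that reads session_11_summary.json would therefore need to look up "1" and "0" rather than 1 and 0, or convert the keys back with int().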

Project Notes

- Workbook-to-CSV conversion and target creation.

- Tokenizer, sequence, and padding preparation.

- CNN-LSTM training-history export.

- Prediction-sample and evaluation-summary outputs.

Launch Controls

Notebook Launch

Open the matching notebook in Google Colab or review the tracked notebook source in GitHub.